Information Security Vendors

A guide on using Monad to enhance detection and alerting systems.

Overview

This scenario-based guide shows how an IAM vendor can use Monad to enhance its detection and alerting systems by integrating Okta System Log and Google Workspace (GWS) Admin Activity events.

Logs are transformed and routed through two pipelines into two S3 buckets: one feeds the detection logic engine, and the second, ML_Datasets, stores data for machine learning. A Snowflake output is also configured to monitor GWS group setting changes.

Outcomes

- Comprehensive Detection: Adding two data sources to the detection engine broadens visibility and helps identify critical security events with greater precision.

- Higher-Fidelity Alerts: Cleaner, less noisy input supports higher-quality alerts, which means fewer false positives and more effective threat response.

- Improved Machine Learning: Routing all events to the ML_Datasets S3 bucket builds a repository for training machine learning models and improving future detection capabilities.

- Standardized, Clean Data Output: Transformations standardize and clean the data, ensuring consistent formatting and making it easier to analyze, query, and integrate into downstream security systems or reports.

Note: This guide assumes the vendor has read access to its customers' Okta System Logs and Google Workspace Admin Activity logs.

Pipeline Setup

Pipeline 1: Okta to two S3 buckets

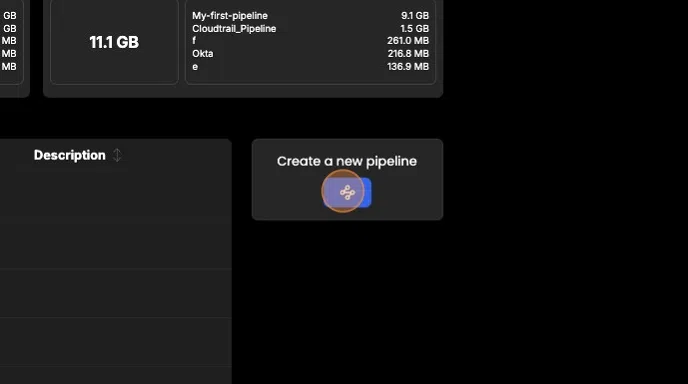

- Select Pipelines from the side panel and click "Create new pipeline."

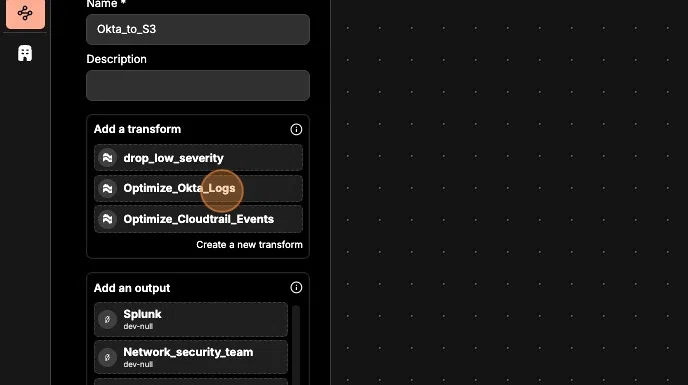

- Name this pipeline (e.g., "Okta_to_S3").

- Set Okta System Log as the input.

- Create the following outputs for Pipeline 1:

- Amazon S3 output for centralizing user activity logs (e.g., User_Activity_S3)

- Amazon S3 output for data to train machine learning models (e.g., ML_Dataset_S3)

- Save the configuration for Pipeline 1.

Pipeline 2: GWS to two S3 buckets and Snowflake

- Select Pipelines from the side panel and click "Create new pipeline."

- Name this pipeline (e.g., "GWS_to_S3_SF").

- Set Google Workspace Admin Activity as the input.

- Create the following outputs for Pipeline 2:

- Amazon S3 output for centralizing user activity logs (e.g., User_Activity_S3)

- Amazon S3 output for data to train machine learning models (e.g., ML_Dataset_S3)

- Snowflake output for capturing and monitoring changes to GWS group settings.

- Save the configuration for Pipeline 2.
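Both pipelines write to the same two S3 buckets. This means Okta and GWS records end up side by side, and consistent field names across the two sources help downstream queries. The sketch below is a hypothetical in-memory model of the topology. The dictionary layout and the `shared_outputs` helper are illustrative assumptions, not a Monad configuration format. It only confirms which outputs both pipelines share.

```python
# Hypothetical model of the two pipelines in this guide. Names mirror the
# example names above; this is not a Monad configuration format.
PIPELINES: dict[str, dict] = {
    "Okta_to_S3": {
        "input": "Okta System Log",
        "outputs": {"User_Activity_S3", "ML_Dataset_S3"},
    },
    "GWS_to_S3_SF": {
        "input": "Google Workspace Admin Activity",
        "outputs": {"User_Activity_S3", "ML_Dataset_S3", "Snowflake"},
    },
}


def shared_outputs(pipelines: dict[str, dict]) -> set[str]:
    """Return the outputs that every pipeline in the mapping writes to."""
    output_sets = [set(p["outputs"]) for p in pipelines.values()]
    if not output_sets:
        return set()
    return set.intersection(*output_sets)


if __name__ == "__main__":
    # Lists the two S3 outputs that both pipelines write to.
    print(sorted(shared_outputs(PIPELINES)))
```

Only the Snowflake output is unique to the GWS pipeline. That is why it is the one that later receives a routing condition.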

Create and Apply Transformations

Optimize Okta Logs

- Navigate to Transforms and click "Create new transform."

- Name the transform (e.g., "Optimize_Okta_Logs").

- Add operations to clean and standardize:

  - Rename `actor.alternateId` to `user_email`.

  - Rename `client.ipAddress` to `ip`.

  - Flatten nested fields such as `client.geographicalContext`.

- Apply a timestamp transformation in ISO 8601 format for consistency.
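The Okta operations above can be sketched in Python as a flatten-then-rename pass over a single System Log event. This is a minimal illustration that assumes events arrive as parsed JSON dictionaries; it is not how Monad implements transforms. `published` is Okta's event timestamp field. The helper names, and the choice to flatten every nested object rather than only `client.geographicalContext`, are assumptions made for brevity.

```python
from datetime import datetime, timezone
from typing import Any

# Hypothetical rename map for the operations described above.
# Keys are dotted paths as they appear after flattening.
OKTA_RENAMES = {
    "actor.alternateId": "user_email",
    "client.ipAddress": "ip",
}


def flatten(obj: dict[str, Any], prefix: str = "") -> dict[str, Any]:
    """Flatten nested dicts into dotted keys; lists and scalars are kept as-is."""
    flat: dict[str, Any] = {}
    for key, value in obj.items():
        path = f"{prefix}.{key}" if prefix else key
        if isinstance(value, dict):
            flat.update(flatten(value, path))
        else:
            flat[path] = value
    return flat


def to_iso8601_utc(ts: str) -> str:
    """Normalize an ISO 8601 timestamp to UTC with millisecond precision and a 'Z' suffix."""
    parsed = datetime.fromisoformat(ts.replace("Z", "+00:00"))
    if parsed.tzinfo is None:
        parsed = parsed.replace(tzinfo=timezone.utc)  # assumption: no offset means UTC
    utc = parsed.astimezone(timezone.utc)
    return utc.isoformat(timespec="milliseconds").replace("+00:00", "Z")


def optimize_okta(event: dict[str, Any]) -> dict[str, Any]:
    """Sketch of Optimize_Okta_Logs: flatten, rename, then normalize the timestamp."""
    flat = flatten(event)  # client.geographicalContext.city, ... become top-level keys
    for old, new in OKTA_RENAMES.items():
        if old in flat:
            flat[new] = flat.pop(old)
    if "published" in flat:
        flat["published"] = to_iso8601_utc(flat["published"])
    return flat


if __name__ == "__main__":
    sample = {
        "published": "2024-05-01T14:34:56.789+02:00",  # offset chosen to show conversion
        "eventType": "user.session.start",
        "actor": {"alternateId": "alice@example.com", "type": "User"},
        "client": {
            "ipAddress": "203.0.113.10",
            "geographicalContext": {"city": "Denver", "country": "United States"},
        },
    }
    print(optimize_okta(sample))
```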

Optimize Google Workspace Logs

- Create a second transform, "Optimize_GWS_Admin_Logs."

- Add similar renaming and flattening operations:

  - Rename `actor.email` to `user_email`.

  - Rename `ipAddress` to `ip`.

  - Flatten fields such as `geoLocation`.

- Apply a timestamp transformation in ISO 8601 format for consistency.
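A comparable sketch for the GWS operations touches only the listed fields instead of flattening the whole record. This keeps nested structures such as the `events` array intact for the routing condition configured later. The record shape follows the Admin SDK Reports API activity format (`id.time`, `actor.email`, `ipAddress`, `events`). The layout of the `geoLocation` object is assumed, and the helper names are hypothetical.

```python
from datetime import datetime, timezone
from typing import Any


def to_iso8601_utc(ts: str) -> str:
    """Normalize an ISO 8601 timestamp to UTC with millisecond precision and a 'Z' suffix."""
    parsed = datetime.fromisoformat(ts.replace("Z", "+00:00"))
    if parsed.tzinfo is None:
        parsed = parsed.replace(tzinfo=timezone.utc)  # assumption: no offset means UTC
    utc = parsed.astimezone(timezone.utc)
    return utc.isoformat(timespec="milliseconds").replace("+00:00", "Z")


def optimize_gws_admin(event: dict[str, Any]) -> dict[str, Any]:
    """Sketch of Optimize_GWS_Admin_Logs applied to one Admin Activity record."""
    out = dict(event)  # shallow copy; nested objects are copied before being changed

    # Rename actor.email -> user_email, keeping any other actor fields.
    actor = dict(out.pop("actor", None) or {})
    if "email" in actor:
        out["user_email"] = actor.pop("email")
    if actor:
        out["actor"] = actor

    # Rename ipAddress -> ip.
    if "ipAddress" in out:
        out["ip"] = out.pop("ipAddress")

    # Flatten geoLocation one level into dotted keys (object shape is assumed).
    geo = out.pop("geoLocation", None)
    if isinstance(geo, dict):
        for key, value in geo.items():
            out[f"geoLocation.{key}"] = value

    # Normalize id.time without mutating the caller's nested dict.
    ident = out.get("id")
    if isinstance(ident, dict) and "time" in ident:
        out["id"] = {**ident, "time": to_iso8601_utc(ident["time"])}
    return out
```

Flattening only named fields is more conservative than a full flatten. Array-valued fields such as `events` keep their original shape, which keeps condition keys like `events.name` meaningful.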

Apply Transforms to Pipelines

Pipeline 1: Okta to S3

- Navigate to Pipelines from the side panel.

- Click on "Okta_to_S3."

- Click "Configure" to enter configuration mode.

- Apply the "Optimize_Okta_Logs" transform to the pipeline:

  - First, remove the default "Always" condition: click the "Always" icon, then either press Backspace or click the "Remove" button in the top right corner of the pipeline builder page.

- Drag the "Optimize_Okta_Logs" transform into the pipeline builder and place it between your input and output nodes.

- Drag from the edge of each node to connect them: link the input to the transform, then link the transform to each of the two S3 outputs.

- Click Save in the top right corner of the window to save the updates.

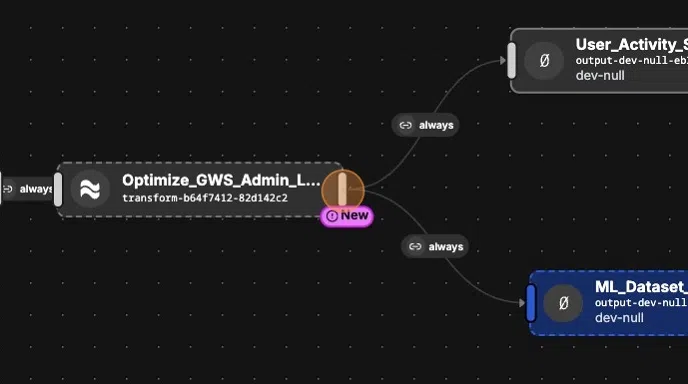
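The wiring steps above produce a small directed graph: the input feeds the transform, and the transform feeds each output. The sketch below models that graph as a list of edges and flags two mistakes. The first is an output left connected directly to the input, which could happen if the default "Always" connection is not removed, assuming that connection links the input straight to an output. The second is an output that receives nothing from the transform. The node names come from this guide; the edge list and the `check_wiring` function are hypothetical.

```python
from collections import deque


def reachable(edges: list[tuple[str, str]], start: str) -> set[str]:
    """Return every node reachable from `start` by following directed edges (BFS)."""
    adjacency: dict[str, list[str]] = {}
    for src, dst in edges:
        adjacency.setdefault(src, []).append(dst)
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in adjacency.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def check_wiring(
    edges: list[tuple[str, str]],
    input_node: str,
    transform: str,
    outputs: list[str],
) -> list[str]:
    """List wiring problems for an input -> transform -> outputs pipeline."""
    problems: list[str] = []
    # An edge straight from the input to an output would bypass the transform.
    for src, dst in edges:
        if src == input_node and dst in outputs:
            problems.append(f"{dst} is connected directly to {input_node}")
    if transform not in reachable(edges, input_node):
        problems.append(f"{transform} is not connected to {input_node}")
    # Every output should receive events from the transform.
    downstream = reachable(edges, transform)
    for out in outputs:
        if out not in downstream:
            problems.append(f"{out} does not receive events from {transform}")
    return problems


if __name__ == "__main__":
    okta_edges = [
        ("Okta System Log", "Optimize_Okta_Logs"),
        ("Optimize_Okta_Logs", "User_Activity_S3"),
        ("Optimize_Okta_Logs", "ML_Dataset_S3"),
    ]
    print(check_wiring(okta_edges, "Okta System Log", "Optimize_Okta_Logs",
                       ["User_Activity_S3", "ML_Dataset_S3"]))  # prints []
```

The same check applies to Pipeline 2 by swapping in the GWS input, "Optimize_GWS_Admin_Logs", and its outputs.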

Pipeline 2: GWS to S3 and Snowflake

- Navigate to Pipelines from the side panel.

- Click on "GWS_to_S3_SF."

- Click "Configure" to enter configuration mode.

- Apply the "Optimize_GWS_Admin_Logs" transform to the pipeline:

  - First, remove the default "Always" condition: click the "Always" icon, then either press Backspace or click the "Remove" button in the top right corner of the pipeline builder page.

- Drag the "Optimize_GWS_Admin_Logs" transform into the pipeline builder and place it between your input and output nodes.

- Drag from the edge of each node to connect them: link the input to the transform, then link the transform to each of the two S3 outputs. The Snowflake output is connected separately in the next step.

- Click Save in the top right corner of the window to save the updates.

Next, connect a third output to Pipeline 2 (GWS) that routes group setting change events to Snowflake for deeper inspection.

- Drag the Snowflake output into the pipeline builder.

- Connect the edge of the "Optimize_GWS_Admin_Logs" transform to the Snowflake output by dragging the edge between the two nodes.

- Click the "Always" button on the connection and then select "Edit conditions" in the top right corner.

- In the Operator section, choose "And."

- Under Rules, select "Key has one of values."

- For the key field, type "events.name".

- For the value field, enter "CHANGE_GROUP_SETTING".

- Click Update to save the condition.

- Finally, click Save in the top right corner to apply the routing changes.
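The condition configured above can be read as a predicate over each transformed GWS record. The sketch assumes two things: a dotted key like `events.name` descends into the `events` array, and the rule matches when any element's `name` is one of the listed values. Monad's exact matching semantics may differ, and all function names here are hypothetical.

```python
from typing import Any


def values_at(record: Any, path: str) -> list[Any]:
    """Collect the values at a dotted path, descending into lists along the way."""
    current: list[Any] = [record]
    for part in path.split("."):
        next_level: list[Any] = []
        for item in current:
            if isinstance(item, dict) and part in item:
                value = item[part]
                if isinstance(value, list):
                    next_level.extend(value)  # e.g. "events" holds a list of event objects
                else:
                    next_level.append(value)
        current = next_level
    return current


def key_has_one_of_values(record: dict[str, Any], key: str, allowed: list[Any]) -> bool:
    """True if any value found at `key` equals one of `allowed`."""
    return any(value in allowed for value in values_at(record, key))


def matches_all(record: dict[str, Any], rules: list[tuple[str, list[Any]]]) -> bool:
    """The "And" operator: every (key, allowed values) rule must match."""
    return all(key_has_one_of_values(record, key, allowed) for key, allowed in rules)


# The single rule configured on the Snowflake connection above.
SNOWFLAKE_RULES: list[tuple[str, list[Any]]] = [("events.name", ["CHANGE_GROUP_SETTING"])]


if __name__ == "__main__":
    record = {"events": [{"type": "GROUP_SETTINGS", "name": "CHANGE_GROUP_SETTING"}]}
    print(matches_all(record, SNOWFLAKE_RULES))  # prints True
```

Only the Snowflake connection carries this condition. The S3 connections are not changed in this step.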

Conclusion

By following this guide, you've successfully set up two pipelines that leverage both Okta System Log and Google Workspace Admin Activity data. Your setup now feeds critical data into your detection engine, builds machine learning datasets for future improvements, and sends GWS group setting changes to Snowflake for deeper inspection.

This approach supports comprehensive detections, high-fidelity alerts, and closer monitoring of IAM posture, putting you in a stronger position to protect your customers.